Kimia Afshari

ML Engineer

Software Engineer

Visual Perception

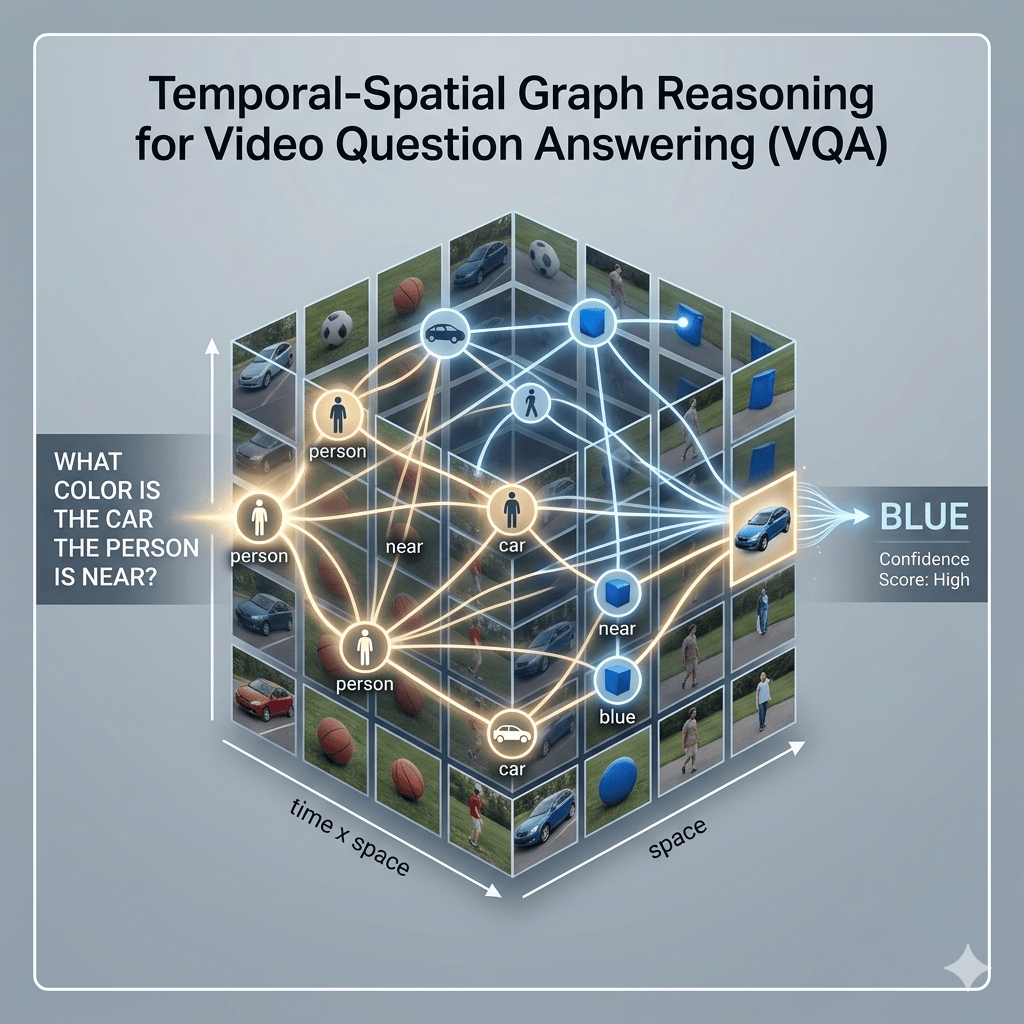

Spatial Video Grounding via Graph Transformers

- Role: Researcher

- Organization: University of California, Santa Barbara (UCSB)

- Date: 2024

- Focus: Vision-Language Models, Graph Transformers, Video QA